98% Less Physical AI Data. Zero loss of operational context.

How I applied the 'delta-keyframe-heartbeat' pattern to robotics data.

I was simulating a robot fleet to generate meaningful data for my “Physical Context Fabric,” the ChatGPT moment for Physical AI, when I realized something. A simulated Turtlebot3 publishing at 10 Hz still generates approximately 1 event per second, even if I collect only every 10th message. That’s roughly 28K events in 8 hours for a single robot, and that is just odometry and command velocity data. This is already unmanageable for my knowledge graph because the signal-to-noise ratio is far too low.

Turtlebot3 simulation after 20 minutes, 20,000+ Event nodes, signal-to-noise ratio unusable.

Now imagine large-scale robot fleet operators like Serve Robotics or Waymo. They run hundreds of autonomous robots every day and have to store and process not just odometry but also LiDAR point clouds, IMU, image/video, radar/sonar, and more. That’s terabytes of data sitting on robot SSDs, waiting to be bulk uploaded when the robot is back at the dock. By the time a data scientist gets a CSV dump 8 hours later, the trail is already cold, making it difficult, if not impossible, to reconstruct what happened.

As I see it, bulk Physical AI data uploads have three problems:

Staleness – with batch uploads, you are always looking backward.

Upload costs – terabyte dumps over spotty connections fail, get corrupted, or arrive out of order.

Context loss – even when the full data arrives, raw sensor replay answers the “what”, not the “why”.

This is not a new problem, though. It is a decades-old data engineering problem that I have already seen and solved during my 18-year data career. So I set out to solve the Physical AI data problem the same way I solved the Industrial IoT data problem. I used the tried-and-tested “Delta-Keyframe-Heartbeat” pattern. In this pattern, we make the edge device intelligent. In this case, that device is the robot gateway. We add logic so that it sends only three things: deltas (changes in velocity or direction), periodic keyframes that contain the full state, and infrequent heartbeats that report robot health. Deltas conserve bandwidth. Periodic keyframes ensure we don’t lose the robot state after a connection drop. Heartbeats tell us the robot is alive and operational.
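Keyframes let the receiving side recover the state. The Python sketch below shows this. It assumes a hypothetical frame format: dicts with `type` and `seq`, plus either a full `state` or a partial `changes` payload. This is not the project’s actual schema. The code sorts frames by sequence number to tolerate out-of-order arrival. It then starts from the newest keyframe and replays only the deltas that follow it.

```python
from __future__ import annotations

from typing import Any

# Hypothetical frame format (an assumption for this sketch, not the project's schema):
#   {"type": "keyframe",  "seq": int, "state":   {...full state...}}
#   {"type": "delta",     "seq": int, "changes": {...changed fields only...}}
#   {"type": "heartbeat", "seq": int}


def reconstruct_state(frames: list[dict[str, Any]]) -> dict[str, Any] | None:
    """Rebuild the latest robot state from the newest keyframe plus the deltas after it.

    Frames may arrive out of order, so they are sorted by sequence number first.
    Returns None if no keyframe has been received yet.
    """
    ordered = sorted(frames, key=lambda f: f["seq"])

    # Locate the most recent keyframe; anything older is superseded by it.
    last_kf = None
    for i, frame in enumerate(ordered):
        if frame["type"] == "keyframe":
            last_kf = i
    if last_kf is None:
        return None

    state = dict(ordered[last_kf]["state"])  # copy so the frame itself is not mutated
    # Apply later deltas in order; heartbeats carry no telemetry and are skipped.
    for frame in ordered[last_kf + 1:]:
        if frame["type"] == "delta":
            state.update(frame["changes"])
    return state
```

If a link drops and some deltas are lost, the next keyframe resets the state to ground truth. That is why the pattern tolerates lossy connections.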

Here is what each frame type in the “delta-keyframe-heartbeat” pattern does:

Delta – Any meaningful change in telemetry: the position moved, the velocity shifted significantly, or the robot state changed (for example, from moving to stopped). The edge gateway detects and computes the delta and writes it only when something meaningful changes.

Keyframe – A full state snapshot written periodically (say, every 30 seconds) that serves as the ground truth for the robot state.

Heartbeat – An alive ping sent every 60 seconds or so while no deltas are being generated. It communicates the robot’s health without sharing any telemetry.
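The three rules above can be sketched as a small edge-side classifier. This is a minimal illustration, not the project’s gateway. The `EdgeGateway` class, the sample fields (`x`, `y`, `linear_x`, `angular_z`), and every threshold are hypothetical. They were chosen only to match the 30-second keyframe and 60-second heartbeat cadences described in the list.

```python
from __future__ import annotations

import math
from dataclasses import dataclass, field
from typing import Any

# Illustrative thresholds (assumptions, not tuned values from the project).
POSITION_EPS_M = 0.25      # position change, in meters, that counts as a delta
VELOCITY_EPS = 0.05        # change in linear/angular velocity that counts as a delta
STOP_EPS = 1e-3            # below this, a velocity is treated as zero
KEYFRAME_PERIOD_S = 30.0   # full-state snapshot cadence
HEARTBEAT_PERIOD_S = 60.0  # liveness ping after this long without a delta or heartbeat


@dataclass
class EdgeGateway:
    """Hypothetical edge gateway turning raw samples into delta/keyframe/heartbeat frames."""

    robot_id: str
    last_sent: dict[str, Any] = field(default_factory=dict)  # state as the backend last saw it
    last_keyframe_t: float | None = None
    last_activity_t: float | None = None  # time of the last delta or heartbeat

    def _changes(self, state: dict[str, Any]) -> dict[str, Any]:
        """Return only the fields that moved past their thresholds since the last send."""
        prev = self.last_sent
        changes: dict[str, Any] = {}
        if math.hypot(state["x"] - prev["x"], state["y"] - prev["y"]) >= POSITION_EPS_M:
            changes.update(x=state["x"], y=state["y"])
        for key in ("linear_x", "angular_z"):
            if abs(state[key] - prev[key]) >= VELOCITY_EPS:
                changes[key] = state[key]
        if state["status"] != prev["status"]:
            changes["status"] = state["status"]
        return changes

    def process(self, sample: dict[str, float], now: float) -> dict[str, Any] | None:
        """Classify one raw sample; return the frame to transmit, or None to drop it."""
        moving = abs(sample["linear_x"]) > STOP_EPS or abs(sample["angular_z"]) > STOP_EPS
        state = {**sample, "status": "moving" if moving else "stopped"}
        if self.last_activity_t is None:
            self.last_activity_t = now  # start the heartbeat timer on the first sample
        header = {"robot_id": self.robot_id, "t": now}

        # Keyframe: periodic full snapshot that also resets the baseline for deltas.
        if self.last_keyframe_t is None or now - self.last_keyframe_t >= KEYFRAME_PERIOD_S:
            self.last_keyframe_t = now
            self.last_sent = dict(state)
            return {**header, "type": "keyframe", "state": dict(state)}

        # Delta: only the fields that changed meaningfully.
        changes = self._changes(state)
        if changes:
            self.last_sent.update(changes)
            self.last_activity_t = now
            return {**header, "type": "delta", "changes": changes}

        # Heartbeat: nothing changed for a while, so just prove the robot is alive.
        if now - self.last_activity_t >= HEARTBEAT_PERIOD_S:
            self.last_activity_t = now
            return {**header, "type": "heartbeat"}

        return None  # nothing meaningful: the sample never leaves the robot
```

The gateway compares each sample with the last state it *sent*, not the last state it *saw*. Because of this, slow drift below the position threshold still accumulates. It eventually triggers a delta instead of being silently lost.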

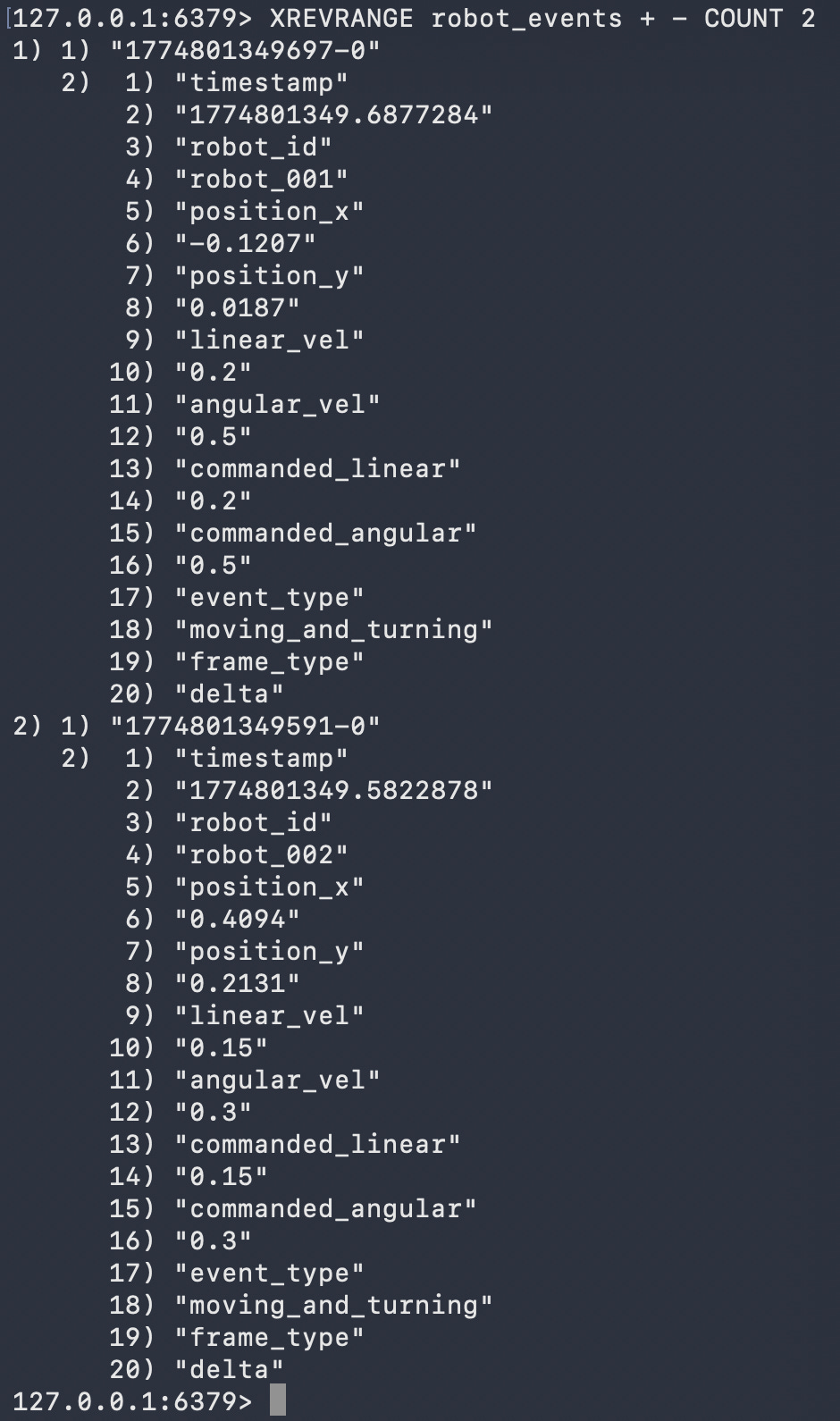

I implemented the same pattern in my own simulation, running a fleet of 3 Turtlebot3 robots for 24 hours. The gateway received 233,353 raw telemetry events and wrote only 522 nodes to the knowledge graph. These included 204 anomalies, 114 keyframe snapshots, and 3 robot identity nodes. That is a 99.8% reduction, and every single anomaly was captured.
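The figures quoted in this post can be checked with a few lines of Python. The `reduction_pct` helper is purely illustrative arithmetic.

```python
def reduction_pct(raw_events: int, written: int) -> float:
    """Share of raw events that never reached the knowledge graph, as a percentage."""
    return 100.0 * (1 - written / raw_events)


# One Turtlebot3 publishing at 10 Hz, keeping every 10th message, for 8 hours.
publish_hz, keep_every, hours = 10, 10, 8
events_per_robot_8h = publish_hz // keep_every * hours * 3600
print(events_per_robot_8h)  # 28800

# 3-robot, 24-hour run: 233,353 raw events in, 522 nodes written.
print(f"{reduction_pct(233_353, 522):.1f}%")  # 99.8%
```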

Every meaningful event is captured in the Redis Event Stream.

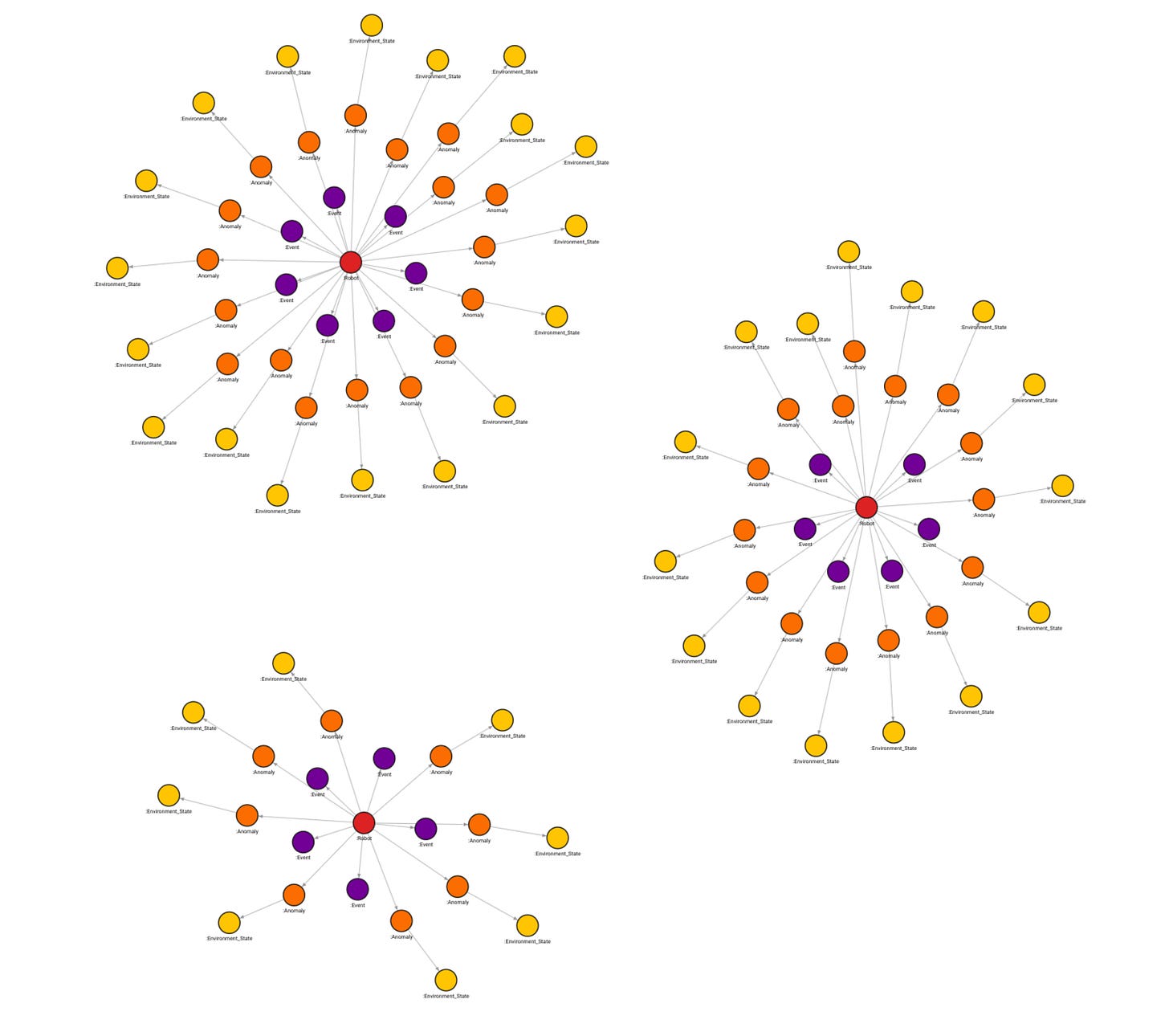
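Redis Streams fit this kind of append-only event log well. The sketch below is hedged and is not the project’s actual publishing code. It assumes a redis-py style client: `redis.Redis` exposes `xadd(name, fields, maxlen=..., approximate=...)`. It also assumes a hypothetical stream name, `fleet:telemetry`. Stream entries are flat field/value maps, so nested payloads are JSON-encoded first.

```python
from __future__ import annotations

import json
from typing import Any


def to_stream_fields(frame: dict[str, Any]) -> dict[str, str]:
    """Flatten a frame into string fields; stream entries cannot hold nested dicts."""
    fields = {"type": frame["type"], "robot_id": frame["robot_id"], "t": str(frame["t"])}
    # Keyframes carry a full "state", deltas carry "changes", heartbeats carry neither.
    payload = frame.get("state", frame.get("changes"))
    if payload is not None:
        fields["payload"] = json.dumps(payload, sort_keys=True)
    return fields


def publish_frame(client: Any, frame: dict[str, Any],
                  stream: str = "fleet:telemetry", maxlen: int = 100_000) -> Any:
    """Append one frame to a Redis stream, trimming it to roughly `maxlen` entries.

    `client` is assumed to be a redis-py style client (e.g. redis.Redis); the
    stream name and maxlen defaults are hypothetical.
    """
    return client.xadd(stream, to_stream_fields(frame), maxlen=maxlen, approximate=True)
```

With a running server, `publish_frame(redis.Redis(), frame)` appends the entry. Consumers can then read it with `XREAD`, or through a consumer group with `XREADGROUP`. The approximate `maxlen` trim keeps the stream bounded, which matters on a small edge device.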

This is what the knowledge graph looks like after 24 hours of fleet operation: three robots, each with its own anomaly history and position context, queryable in real time. It is still compact and manageable, with zero context loss.

24 hours of fleet telemetry: 3 robots, 204 anomalies, geographic clusters visible.

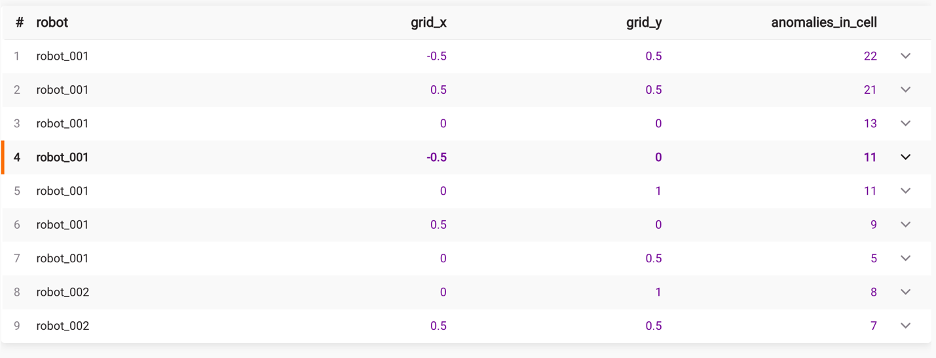

And because every anomaly carries a position, you can ask questions that raw replay can never answer. robot_001 kept stopping in two specific map regions, while robot_002 stopped less frequently but in a tighter cluster. That is not a replay result; that is operational context.
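One simple way to surface hotspots like these is to bucket anomaly positions into a fixed grid and count them per robot. The sketch below does that. It is a swapped-in illustration, not necessarily how the project clusters anomalies; density-based methods such as DBSCAN are a common alternative. The 1-meter cell size and the `(robot_id, x, y)` input shape are assumptions.

```python
from __future__ import annotations

import math
from collections import Counter, defaultdict
from typing import Iterable


def anomaly_hotspots(anomalies: Iterable[tuple[str, float, float]],
                     cell_m: float = 1.0, top_k: int = 2) -> dict[str, list]:
    """Bucket anomaly positions into a square grid and return each robot's busiest cells.

    anomalies: (robot_id, x, y) tuples in map coordinates (meters, assumed).
    Returns {robot_id: [((cell_x, cell_y), count), ...]} sorted by count, descending.
    """
    per_robot: dict[str, Counter] = defaultdict(Counter)
    for robot_id, x, y in anomalies:
        # floor() keeps negative coordinates in the correct cell.
        cell = (math.floor(x / cell_m), math.floor(y / cell_m))
        per_robot[robot_id][cell] += 1
    return {robot: counts.most_common(top_k) for robot, counts in per_robot.items()}
```

A robot with two dominant cells, like robot_001 here, shows up as two high counts in its top-k list. A tight cluster concentrates its counts in a single cell.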

Geographic anomaly clustering across 3 robots, robot_001 has two distinct hotspot regions.

The hardware problem in Physical AI is largely solved. Tools like Foxglove let you replay exactly what the robot saw. But a replay only answers the “what”. Nobody is answering the “why”. The gap between raw telemetry and operational understanding is where I think the real infrastructure work needs to happen. Frankly, it is also where I am spending my time.

The delta-keyframe-heartbeat pattern itself is not new; I have applied it across 18 years of data engineering in Industrial IoT. What is new is bringing this thinking to Physical AI. That means combining it with a streaming pipeline and a knowledge graph, and making the robot’s edge compute node intelligent enough to decide what matters before the data ever hits the network.

This is Part 2 of my Physical Context Fabric open-source project series. Part 1 covered standing up ROS2 natively on a Raspberry Pi 5 and connecting it to Foxglove during GTC week. The next post covers the part I am most excited about: a natural-language Q&A interface on top of the knowledge graph. Ask it why robot_001 kept stopping in the top-left quadrant, and get an answer grounded entirely in what the robot actually did. No hallucination. No speculation. Purely context-driven.

If you are building Physical AI data infrastructure or are just curious about the stack, the repo is fully open source and runs on a Raspberry Pi 5 and a MacBook Air. Come build with me.