The Corporate Brain: Eliminating Contextual Drift through Knowledge Graphs

Graph-powered AI as the Secret to a Seamless Digital Employee Experience (DEX)

The industry is currently obsessed with “Chat with your PDF” bots. But for an AI Architect, these are toys. In a high-precision enterprise environment, employee knowledge is a living, breathing network of dependencies.

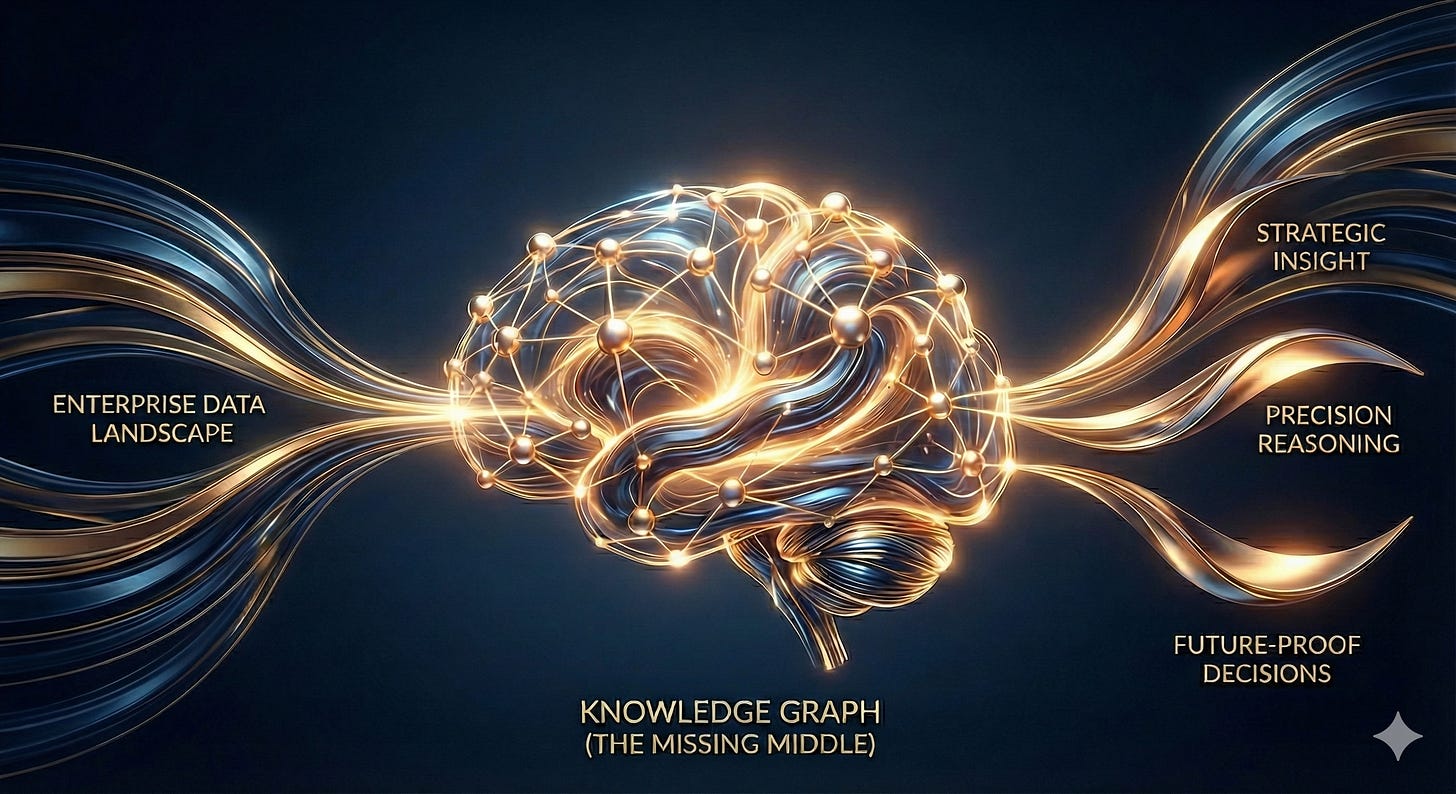

Most internal AI initiatives eventually hit a plateau characterized by Contextual Blindness, the inability of a model to see the logical connective tissue between disparate data silos. To solve the Digital Employee Experience (DEX) crisis, we don’t just need bigger models; we need a more sophisticated “memory.” We need the Knowledge Graph.

The DEX Crisis: The “Missing Middle” and Contextual Drift

What is DEX? DEX is the sum of every digital interaction an employee has at work, from the first line of code in GitHub to the final status update in Jira. To truly optimize this experience, we must bridge the “Semantic Gap” between Slack, Jira, and GitHub. In high-stakes technical environments, DEX isn’t a luxury; it’s a mission-critical moat.

Why Current AI Fails DEX: Most organizations are currently distracted by “Linear RAG.” They load documents into a vector database and treat knowledge as isolated points in embedding space rather than as a connected sequence of events.

This creates the “Missing Middle”: the layer between your raw data silos and the LLM’s reasoning. This is where Contextual Drift sets in. As data evolves across Slack, Jira, and GitHub, a vector-only system loses the “thread” of truth:

Relational Fragility: Vector search finds fragments that look similar but cannot verify if they are logically related.

Contextual Blindness: The model might see a “GPU error” in a document but remains blind to the fact that a specific developer is currently fixing that exact error in a private branch.

The Provenance Gap: Without a graph, an LLM cannot provide an audit trail of how it connected a Slack complaint to a technical resolution.

The Solution: GraphRAG & The Semantic Bridge

To solve Contextual Blindness, we must decouple the Logic (the LLM) from the State (the Knowledge Graph). By using ArangoDB as our reasoning substrate, we address two of the hardest technical hurdles in DEX:

1. The Multi-Hop Problem: Bridging Slack to Jira

Most internal questions are “multi-hop” by nature.

The Query: “What was the resolution of the sensor anomaly discussed in Slack last Tuesday by the lead on Project Orion?”

The Problem: Linear RAG might find the Slack thread or the Jira ticket, but it cannot logically traverse the link between them.

The Semantic Bridge: In a graph database like ArangoDB, we define the “verbs” (edges) that connect these entities, allowing the agent to “walk” deterministically from a conversation to a technical fix.
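To make the “walk” concrete, here is a minimal in-memory sketch of a multi-hop traversal. It assumes a hypothetical edge list with illustrative verbs (DISCUSSES, TRACKED_BY, RESOLVED_BY) and ArangoDB-style `Collection/key` IDs; in ArangoDB itself this would be an AQL graph traversal rather than Python.

```python
from collections import deque

# Hypothetical edges: (from_id, verb, to_id). IDs mimic ArangoDB's
# "Collection/key" document-handle convention; the verbs are illustrative.
EDGES = [
    ("SlackMessages/msg_42", "DISCUSSES", "Projects/orion"),
    ("Projects/orion", "TRACKED_BY", "JiraTickets/ORI-17"),
    ("JiraTickets/ORI-17", "RESOLVED_BY", "Commits/a1b2c3"),
]

def walk(start: str, edges, max_depth: int = 3):
    """Breadth-first walk along outbound edges. Returns every reachable
    vertex with the list of (from, verb, to) hops that led to it."""
    adjacency = {}
    for src, verb, dst in edges:
        adjacency.setdefault(src, []).append((verb, dst))
    seen = {start}
    queue = deque([(start, [])])
    results = []
    while queue:
        node, path = queue.popleft()
        if len(path) >= max_depth:  # stop expanding beyond the hop limit
            continue
        for verb, nxt in adjacency.get(node, []):
            if nxt in seen:
                continue
            seen.add(nxt)
            hop_path = path + [(node, verb, nxt)]
            results.append((nxt, hop_path))
            queue.append((nxt, hop_path))
    return results

for vertex, path in walk("SlackMessages/msg_42", EDGES):
    print(vertex, "via", " -> ".join(verb for _, verb, _ in path))
```

Each returned hop list doubles as a provenance trail: it records exactly which verbs connected the Slack message to the commit.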

2. Entity Resolution: The Identity Handshake

Corporate data is a “siloed aliases” nightmare.

Slack: @Sreeram | Jira: snudurupati | GitHub: sreeram-dev

If your system doesn’t know these are the same physical person, your “Corporate Brain” has multiple personalities. We solve this through Entity Resolution: mapping disparate aliases to a unique Global Entity ID before the data ever lands in a graph database like ArangoDB.
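A minimal sketch of the “Identity Handshake” as a lookup step that runs before ingestion. The alias registry, the `person:0001` ID format, and the `tag_event` helper are hypothetical; a real registry would be curated or learned rather than hard-coded.

```python
from __future__ import annotations

# Hypothetical registry: (source_system, normalized_handle) -> Global Entity ID.
ALIAS_REGISTRY = {
    ("slack", "@sreeram"): "person:0001",
    ("jira", "snudurupati"): "person:0001",
    ("github", "sreeram-dev"): "person:0001",
}

def normalize(system: str, handle: str) -> tuple[str, str]:
    """Trim and case-fold so trivial variations map to the same key."""
    return system.strip().lower(), handle.strip().lower()

def resolve(system: str, handle: str) -> str | None:
    """Return the Global Entity ID for a siloed alias, or None if unknown."""
    return ALIAS_REGISTRY.get(normalize(system, handle))

def tag_event(event: dict) -> dict:
    """Attach the resolved entity ID to a raw event before it is loaded."""
    return {**event, "entity_id": resolve(event["system"], event["author"])}
```

Unknown aliases resolve to `None` instead of silently creating a new person, which keeps unresolved identities visible for review.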

Architecting the Corporate Brain: A 10-Step Blueprint

Here is the 10-step path from fragmented data to an autonomous reasoning engine using ArangoDB and LangGraph.

1. The Secure Substrate

Establishing a secure, authenticated connection to our ArangoDB instance. Governance is the first priority; the "Brain" must only access authorized nodes.
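A sketch of the governance side of the substrate, assuming credentials come from hypothetical environment variables (`ARANGO_URL`, `ARANGO_USER`, `ARANGO_PASSWORD`, `ARANGO_ALLOWED`). With the python-arango driver these values would be passed to `ArangoClient(hosts=...)` and `client.db(name, username=..., password=...)`; that wiring is omitted so the sketch runs without a server.

```python
import os
from dataclasses import dataclass, field
from urllib.parse import urlparse

@dataclass(frozen=True)
class SubstrateConfig:
    url: str
    database: str
    username: str
    password: str = field(repr=False)  # keep the secret out of logs and reprs
    allowed_collections: frozenset = frozenset()

def load_config(env=os.environ) -> SubstrateConfig:
    """Read connection settings from (hypothetical) environment variables."""
    url = env["ARANGO_URL"]
    parsed = urlparse(url)
    # Refuse plaintext connections unless the server is local.
    if parsed.scheme != "https" and parsed.hostname not in ("localhost", "127.0.0.1"):
        raise ValueError("refusing a non-TLS connection to a remote host")
    allowed = env.get("ARANGO_ALLOWED", "")
    return SubstrateConfig(
        url=url,
        database=env.get("ARANGO_DB", "corporate_brain"),
        username=env["ARANGO_USER"],
        password=env["ARANGO_PASSWORD"],
        allowed_collections=frozenset(c.strip() for c in allowed.split(",") if c.strip()),
    )

def authorize(config: SubstrateConfig, collection: str) -> None:
    """Governance gate: raise before the agent touches an unlisted collection."""
    if collection not in config.allowed_collections:
        raise PermissionError(f"collection {collection!r} is not authorized")
```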

2. Topological Definition

Defining the Topology of Work. We create specific collections for Employees, JiraTickets, and Commits, turning “static rows” into “active entities.”
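One way to sketch this topology is a small typed model per vertex type, each mapped to its own document collection. The field names below are illustrative assumptions, not a fixed schema; the only ArangoDB convention relied on is that `_key` identifies a document within its collection.

```python
from __future__ import annotations
from dataclasses import dataclass, asdict

# Hypothetical vertex types; fields are illustrative.
@dataclass
class Employee:
    key: str
    name: str
    role: str

@dataclass
class JiraTicket:
    key: str
    summary: str
    status: str

@dataclass
class Commit:
    key: str  # e.g. the commit SHA
    message: str

# Each vertex type lives in its own document collection.
COLLECTIONS = {Employee: "Employees", JiraTicket: "JiraTickets", Commit: "Commits"}

def to_document(entity) -> tuple[str, dict]:
    """Return (collection_name, document) using ArangoDB's `_key` convention."""
    body = asdict(entity)
    body["_key"] = body.pop("key")
    return COLLECTIONS[type(entity)], body
```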

3. Graph Logic Initialization

Initializing the schema and defining the Semantic Bridges, the “Edge Collections” (like HAS_IDENTITY or ADDRESSES) that serve as neural pathways.
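The edge collections can be declared as data. The dictionaries below follow the shape that, to our understanding, python-arango accepts in `create_graph(..., edge_definitions=...)`; which vertex collections each verb connects (including the `Aliases` collection) is an assumption. The small checker rejects edges that do not fit the declared bridges.

```python
# Hypothetical semantic bridges between vertex collections.
EDGE_DEFINITIONS = [
    {"edge_collection": "HAS_IDENTITY",
     "from_vertex_collections": ["Employees"],
     "to_vertex_collections": ["Aliases"]},
    {"edge_collection": "ADDRESSES",
     "from_vertex_collections": ["Commits"],
     "to_vertex_collections": ["JiraTickets"]},
]

def check_edge(edge_collection: str, from_id: str, to_id: str,
               definitions=EDGE_DEFINITIONS) -> bool:
    """Return True if an edge from `from_id` to `to_id` fits the schema.
    IDs are ArangoDB document handles of the form 'Collection/key'."""
    for d in definitions:
        if d["edge_collection"] == edge_collection:
            return (from_id.split("/", 1)[0] in d["from_vertex_collections"]
                    and to_id.split("/", 1)[0] in d["to_vertex_collections"])
    return False  # unknown edge collection
```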

4. Seeding the “Project Orion” Loop

Creating a cross-domain trace: a Slack message references a project, which links to a Jira ticket, which links to a GitHub commit.
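A sketch of the seed data as plain documents and edges, using ArangoDB’s `_key`, `_from`, and `_to` fields. All keys, texts, and edge names are hypothetical; with python-arango, each list could then be loaded via the target collection’s `insert_many`. A quick integrity check catches edges that point at vertices that were never created.

```python
def seed_orion():
    """Build a small, hypothetical Slack -> Project -> Jira <- Commit trace."""
    vertices = {
        "SlackMessages": [{"_key": "msg_42", "text": "Seeing a sensor anomaly on Orion again"}],
        "Projects": [{"_key": "orion", "name": "Project Orion"}],
        "JiraTickets": [{"_key": "ORI-17", "summary": "Sensor anomaly", "status": "Done"}],
        "Commits": [{"_key": "a1b2c3", "message": "Fix sensor calibration drift"}],
    }
    edges = {
        "REFERENCES": [{"_from": "SlackMessages/msg_42", "_to": "Projects/orion"}],
        "CONTAINS": [{"_from": "Projects/orion", "_to": "JiraTickets/ORI-17"}],
        # Commit points at the ticket it fixes; traversals can follow it in either direction.
        "ADDRESSES": [{"_from": "Commits/a1b2c3", "_to": "JiraTickets/ORI-17"}],
    }
    return vertices, edges

def dangling_edges(vertices, edges):
    """List (edge_collection, edge) pairs whose endpoints do not exist."""
    known = {f"{col}/{doc['_key']}" for col, docs in vertices.items() for doc in docs}
    return [(name, e) for name, es in edges.items() for e in es
            if e["_from"] not in known or e["_to"] not in known]
```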

5. Entity Resolution (ER)

Implementing the “Identity Handshake” to normalize siloed aliases into a unified graph node. This is the foundation of cross-domain intelligence.
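Once match evidence exists, one common technique is to cluster aliases with union-find (the transitive closure of “same as” pairs) and emit one Global Entity per cluster plus `HAS_IDENTITY` edges. This is a sketch: the `same_as` pairs are assumed to come from upstream matching rules (shared email, manual confirmation), and the `person_0001` key format is hypothetical.

```python
def resolve_entities(aliases, same_as):
    """Cluster aliases via union-find over `same_as` evidence pairs.
    Returns (global_entity_docs, has_identity_edges)."""
    parent = {a: a for a in aliases}

    def find(a):
        while parent[a] != a:
            parent[a] = parent[parent[a]]  # path halving keeps trees shallow
            a = parent[a]
        return a

    for a, b in same_as:
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[max(ra, rb)] = min(ra, rb)  # deterministic root choice

    clusters = {}
    for a in aliases:
        clusters.setdefault(find(a), []).append(a)

    entities, edges = [], []
    for i, root in enumerate(sorted(clusters), start=1):
        gid = f"person_{i:04d}"
        entities.append({"_key": gid})
        for alias in sorted(clusters[root]):
            edges.append({"_from": f"Employees/{gid}", "_to": f"Aliases/{alias}"})
    return entities, edges
```

Because the closure is transitive, evidence that Slack matches Jira and Jira matches GitHub is enough to merge all three handles.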

6. Multi-Hop Pathfinder (AQL)

Executing Deterministic Reasoning using ArangoDB Query Language (AQL). We ask the graph to “walk” the path from a handle to a project, then to a task, and finally to the code.
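A sketch of how such a traversal might be expressed in AQL and parameterized from Python. The graph name `corporate_brain`, the depth of 4, and the `Aliases`/`Commits` collections are assumptions; `ANY` lets the walk follow edges regardless of direction. With python-arango, the pair would be run via `db.aql.execute(query, bind_vars=bind_vars)`.

```python
from __future__ import annotations

# Parameterized traversal: the start vertex is a bind variable, never
# string-concatenated into the query text.
HANDLE_TO_CODE_AQL = """
FOR v, e, p IN 1..4 ANY @start GRAPH 'corporate_brain'
    OPTIONS { uniqueVertices: "path" }
    FILTER IS_SAME_COLLECTION('Commits', v)
    RETURN { commit: v._key, path: p.vertices[*]._id }
"""

def build_query(start_id: str) -> tuple[str, dict]:
    """Return (aql, bind_vars) for a handle-to-commit traversal."""
    if "/" not in start_id:
        raise ValueError("start must be a document _id like 'Aliases/slack_sreeram'")
    return HANDLE_TO_CODE_AQL, {"start": start_id}
```

Returning `p.vertices[*]._id` alongside each commit gives the provenance path the business case below depends on.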

7. Orchestration via LangGraph

Defining a stateful agent that records every step in a “Glass Box” trace, making the AI’s reasoning fully auditable and transparent.
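The “Glass Box” idea can be sketched without LangGraph itself: nodes receive state and return partial updates, and an append-only `trace` records every step. This stdlib stand-in loosely mirrors how a LangGraph `StateGraph` merges updates with a reducer such as `Annotated[list, operator.add]`; the node names and state fields are hypothetical.

```python
from __future__ import annotations
from typing import Callable, TypedDict

class AgentState(TypedDict):
    question: str
    entity_id: str | None
    findings: list[str]
    trace: list[str]

Node = Callable[[AgentState], dict]

def run_pipeline(state: AgentState, nodes: list[tuple[str, Node]]) -> AgentState:
    """Run nodes in order, merging partial updates. The `trace` field is
    appended to (never overwritten), so every step stays auditable."""
    for name, node in nodes:
        update = node(state)
        state = {**state, **{k: v for k, v in update.items() if k != "trace"}}
        state["trace"] = state["trace"] + [f"{name}: {update.get('trace', 'ok')}"]
    return state

# Hypothetical nodes with canned results.
def resolve_identity(state):
    return {"entity_id": "person_0001", "trace": "matched @Sreeram -> person_0001"}

def traverse(state):
    return {"findings": ["JiraTickets/ORI-17", "Commits/a1b2c3"],
            "trace": f"walked from {state['entity_id']} to 2 vertices"}
```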

8. Contextual Resolution

Upgrading the agent to handle Discovery Queries. If a user asks about “GPU issues,” the agent resolves the Project Context and navigates the graph to find the expert assigned to the relevant task.
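A minimal sketch of a Discovery Query: resolve project context from keywords, then walk project, task, and assignee. The in-memory dictionaries stand in for graph slices and are entirely hypothetical; simple keyword matching is a placeholder for whatever intent resolution the agent actually uses.

```python
# Hypothetical slices of the graph.
PROJECT_KEYWORDS = {"orion": {"gpu", "sensor", "telemetry"}}
PROJECT_TASKS = {"orion": ["ORI-17", "ORI-21"]}
TASK_TOPICS = {"ORI-17": {"sensor"}, "ORI-21": {"gpu"}}
TASK_ASSIGNEE = {"ORI-17": "person_0001", "ORI-21": "person_0002"}

def find_experts(question: str) -> list[tuple[str, str, str]]:
    """Return (project, task, assignee) triples relevant to the question."""
    words = {w.strip("?.,!").lower() for w in question.split()}
    hits = []
    for project, keywords in PROJECT_KEYWORDS.items():
        topics = words & keywords          # step 1: resolve project context
        if not topics:
            continue
        for task in PROJECT_TASKS.get(project, []):   # step 2: project -> task
            if TASK_TOPICS.get(task, set()) & topics:
                hits.append((project, task, TASK_ASSIGNEE[task]))  # step 3: task -> expert
    return hits
```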

9. Wiring the Agentic Workflow

Compiling the nodes to ensure the agent must resolve an identity and traverse the semantic bridges before drawing conclusions.
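The ordering constraint can be expressed as a routing function. In LangGraph this kind of function would typically be attached with `add_conditional_edges`; the stdlib loop below is a stand-in so the sketch runs on its own, and the node names are hypothetical.

```python
def route(state: dict) -> str:
    """Pick the next node: `conclude` is only reachable after an identity
    is resolved and at least one semantic bridge has been traversed."""
    if state.get("entity_id") is None:
        return "resolve_identity"
    if not state.get("findings"):
        return "traverse"
    return "conclude"

def run(state: dict, nodes: dict, max_steps: int = 10) -> dict:
    """Drive the agent until it concludes; fail loudly if it never does."""
    for _ in range(max_steps):
        step = route(state)
        state = {**state, **nodes[step](state)}
        if step == "conclude":
            return state
    raise RuntimeError("agent did not converge within max_steps")
```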

10. The Gap Detector (The Proactive Architect)

The final polish: a “Critic” node that proactively flags Stalled Work, meaning Jira tickets that have no linked GitHub commits.
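A sketch of the Critic’s core check, written as an in-memory function with an equivalent AQL string for reference. Restricting the check to open statuses is our assumption about what “stalled” means; the status values and the `ADDRESSES` direction (commit to ticket) are also assumptions.

```python
# Sketch of the same check in AQL (not executed here).
STALLED_AQL = """
FOR t IN JiraTickets
    FILTER t.status IN @open_statuses
    LET commits = (FOR c IN 1..1 INBOUND t ADDRESSES RETURN c._key)
    FILTER LENGTH(commits) == 0
    RETURN t._key
"""

def stalled_tickets(tickets, addresses_edges, open_statuses=("To Do", "In Progress")):
    """Return sorted keys of open tickets with no inbound ADDRESSES edge from a commit."""
    covered = {e["_to"] for e in addresses_edges if e["_from"].startswith("Commits/")}
    return sorted(
        t["_key"] for t in tickets
        if t.get("status") in open_statuses and f"JiraTickets/{t['_key']}" not in covered
    )
```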

The Business Case for Graph-Powered AI

By leveraging a Knowledge Graph substrate like ArangoDB, we provide the enterprise with three massive business value propositions:

Explainable AI (XAI): We move away from “black box” hallucinations. Every answer includes a provenance trace, showing the exact AQL traversal that connected the intent to the action.

Operational Auditability: The ability to “detect gaps” between management tools and technical reality provides leadership with a real-time risk-assessment engine.

Organizational Velocity: By bridging silos through Entity Resolution, we eliminate the “Context Tax” that costs engineers hours of productivity every week.

For organizations where data is a strategic asset and precision is a requirement, the Knowledge Graph isn’t just an option; it’s the engine of the modern Digital Employee Experience.

Get the full code in the repo: snudurupati/agentic-ai-architect

Next Week: We move from architecture to a high-stakes competitive analysis. We are setting up a definitive "Bake-Off" between two of the most discussed strategies in the enterprise AI space: Continued Pre-Training vs. Knowledge Graphs (GraphRAG).

While some argue that baking internal knowledge directly into model weights is the path to "true" intelligence, we will examine the cold realities of cost, latency, and the inevitable "Drift" that occurs when static weights meet a dynamic codebase. We’ll weigh the trade-offs of both paradigms to see where they might coexist, and where one holds the edge in a mission-critical production environment while the other becomes an expensive dead end.